A term, a movement, and a manifesto for AI filmmaking by Andrew “Oyl” Miller.

Whether you’ve spent 15 minutes or 15 years in the trenches of advertising, you know the exact weight of a 100-page deck. You know the sound of a client’s legal team slowly killing the best idea in the room, filing down its edge just to be safe. You know the calendar math: concept approved in Q1, cameras rolling in Q3, spot airing by Q4, assuming nothing dies in the room and no corporate restructuring happens between now and then.

Our entire industry was architected around one load-bearing bottleneck: Permission.

Permission to access capital. Permission to hire a crew. Permission from a CMO, a legal team, a regional brand manager, and a nervous account director to turn a script into something that actually lives in the world.

I am here to tell you Permission is Over (If You Want It).

Welcome to Post-Permission Cinema.

Defining Post-Permission Cinema

Post-Permission Cinema is the filmmaking reality we now live in. A moment in creative history where generative AI has completely vaporized the friction between conceptualization and execution.

It is the ability for a single creator, or a small team moving as one, to write, direct, and ship broadcast-quality narrative work without traditional gatekeepers, institutional funding, or the logistical weight of a conventional production.

It’s scary and raw. There are no fallbacks. Accountability is out in the open.

In the Post-Permission Cinema era, the barrier to entry is no longer budget. It is no longer access. It is no longer who you know inside a network, studio, or holding company.

The only barrier left is vision, taste, and the willingness to execute.

I coined this term because the industry needed one. We have been describing pieces of this shift with tags and technical jargon: “AI filmmaking,” “synthetic media,” “generative content.” But we haven’t yet zoomed out and named the larger historical rupture those pieces add up to. Post-Permission Cinema is that rupture. It is a named era, the way we name the French New Wave or the birth of digital non-linear editing. Something permanent has changed. The least we can do is call it what it is.

The Four Tenets of Post-Permission Cinema

Operating in this new reality requires a complete rewiring of how we think about creative production. These are the core tenets:

1. Execution Kills the Pitch Deck

Nobody wants to scan another generic mood board with the same tired references that have been passed down from Tumblr to Pinterest to TikTok and squirreled away on art directors’ messy desktops. When the tools exist to generate high-fidelity cinematic renders in minutes, when you can go from a script idea to a locked cut in a weekend, writing a deck about what a film might look like is a waste of everyone’s time, including yours.

In Post-Permission Cinema, the pitch is the pilot. You no longer ask a client or an executive to imagine the final product. You drop the final product in an email. The new starting point is the rough cut, Pixar-style: we judge the details right after we have the script. We are flattening time and exposing taste, or lack thereof.

I’ve spent years watching teams put together the best pitch decks and treatments in the business. Beautifully typeset. Full reference imagery. High-taste references and GIFs. Careful strategic framing. I’m proud of that craft. And I am telling you, as someone who lived inside that system, that those decks were always a substitute for the thing we actually wanted to make. Now we can just make the thing.

Execution is the only currency that matters now. No more hiding behind borrowed references.

2. Taste Is Your Only Moat

When anyone can prompt a photorealistic scene using Luma Dream Machine, Veo, Runway, or Midjourney, and when the render button is universally accessible, the technology itself stops being a competitive advantage. The question of craft becomes: what will you put into these dream machines? Sloppy input leads to sloppy output. Just look at what our feeds have become.

What separates a filmmaker from someone mashing “generate” is everything that comes before the prompt and everything that happens after the output: the conceptual architecture, the instinct for what to cut, the understanding of pacing and tone, and why a certain piece of music makes a sequence land differently. And most importantly, knowing when what you’ve generated doesn’t hit the mark.

This is where years of agency rigor and rendering harsh judgment in the name of raising the creative bar pay dividends in ways no one expected. I’ve spent two decades learning how to build narrative tension inside a 60-second broadcast window. Learning how lighting, camera movement, set design, wardrobe, casting, and all the individual disciplines add up to elevate a piece of work. Learning how a single editorial choice can be the difference between a film people share and a film people scroll past.

None of that experience became obsolete when the tools arrived. It became the whole game. Your eye plus your judgment will define you.

3. The Pipeline Is Flat

Traditional production is a strictly linear march: Pre-Production → Production → Post-Production. Each phase is a separate kingdom with its own vendors, timelines, and handoffs. The whole apparatus was designed to manage the complexity of physical production, which required sequences and dependencies almost by necessity.

Post-Permission Cinema compresses all of that into a singular, overlapping workflow. Scripting, storyboarding, scoring, and cutting happen simultaneously and recursively. I’ll be editing a sequence, and it will suggest a narrative direction I hadn’t considered, which sends me back to the script, which changes the shot list before I’ve even “generated” the shots.

The director is no longer managing a logistical army. They are conducting a symphony of algorithms, agents, and enhanced capabilities, and more importantly, they are making artistic decisions at every stage that used to get diluted across a dozen departments and handoffs.

4. The Audience Is the Only Approver

We used to let ideas die quietly in conference rooms. A concept would get as far as a deck, hit a budget wall or an anxious client, and simply disappear, unmade and unseen, as if it never existed.

Today you build the work. Then you push it directly into the cultural slipstream and let the timeline decide. Creators are already operating this way. Brands and studios will be next. If they’re smart.

The internet is a ruthless and honest editor. If the work is undeniable, it moves. If it doesn’t move, the problem is yours to solve, not a committee’s, not a budget holder’s. That accountability is clarifying in a way that the permission economy never was.

This Isn’t Theory. It’s Already on the Air.

I want to be precise about this because the discourse around AI filmmaking still tends to exist at the level of demos, proofs of concept, tech presentations, and Discord threads. What I’m describing has already crossed into the broadcast world.

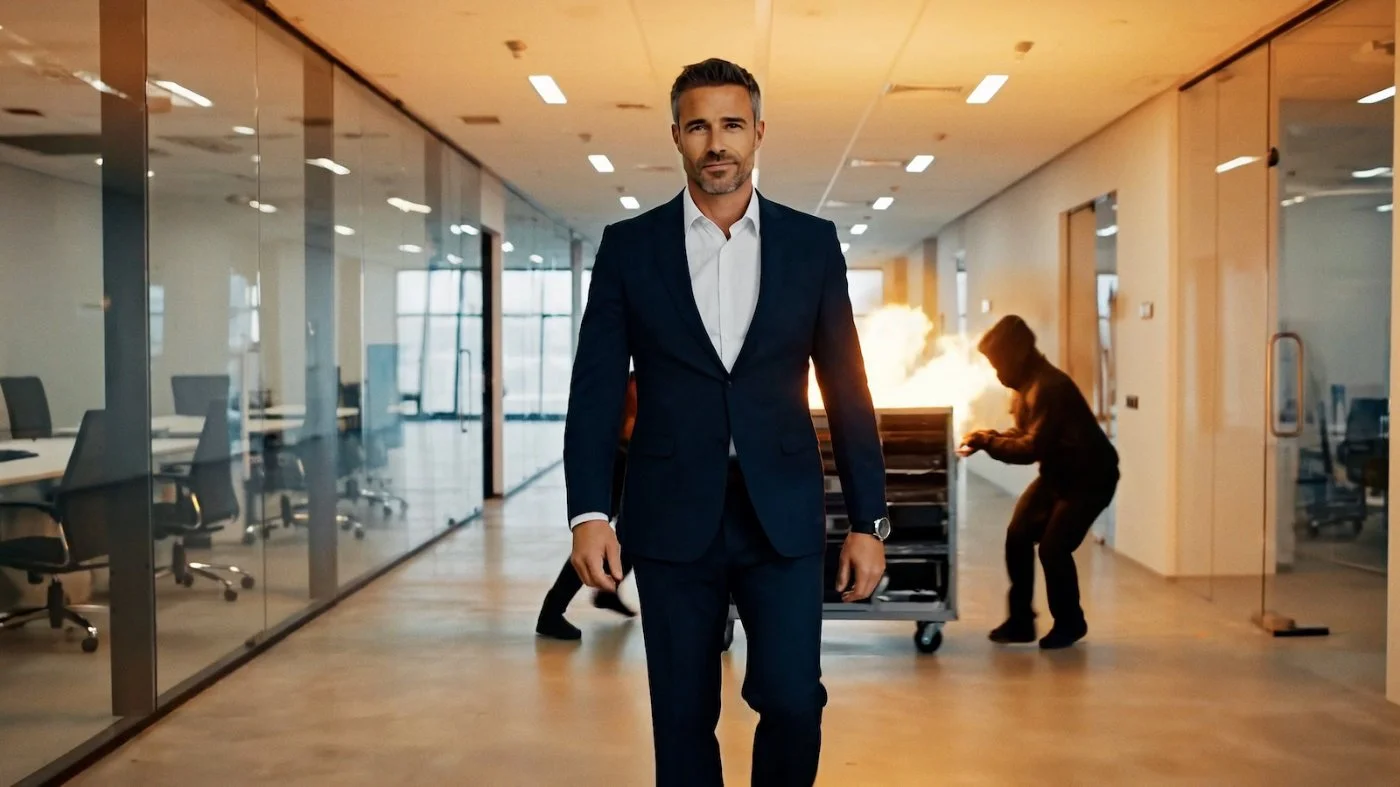

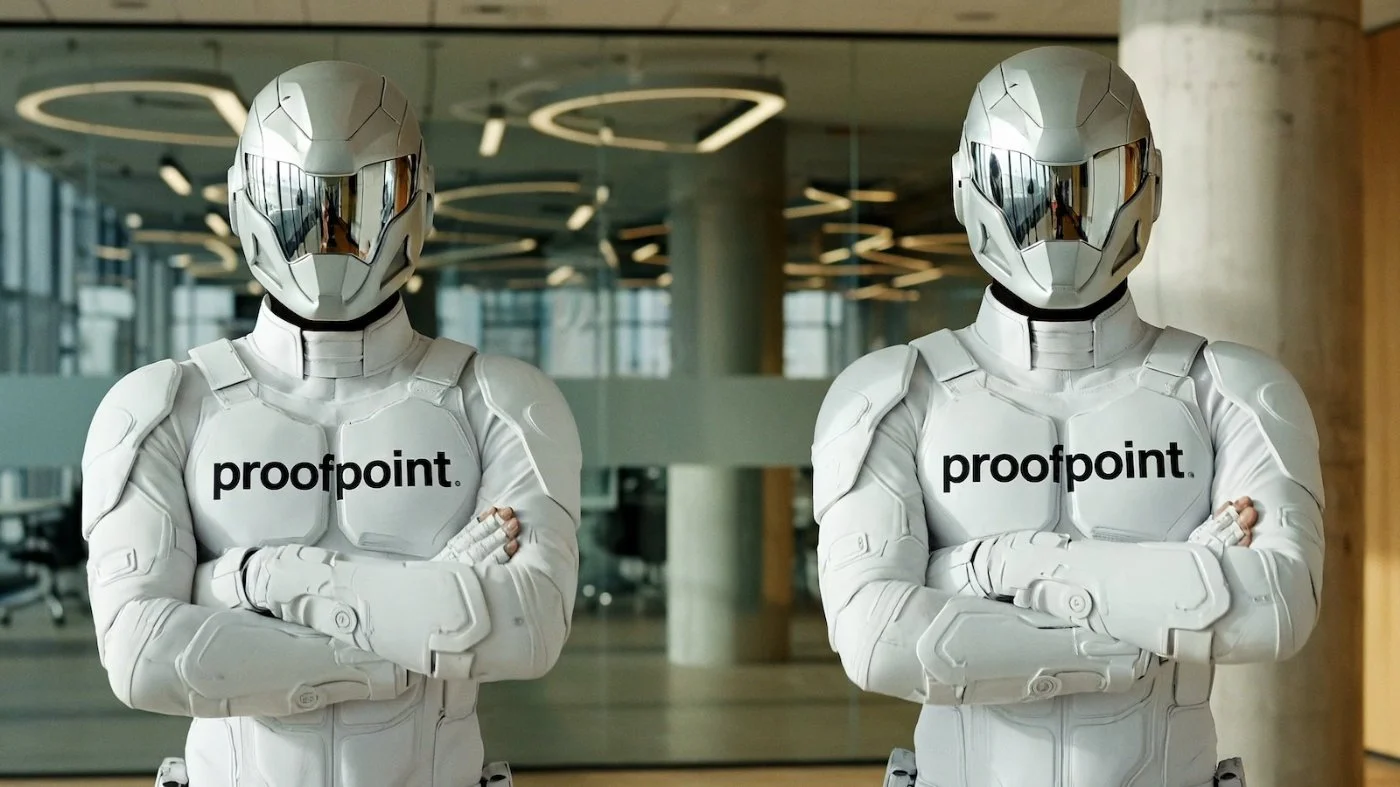

In early 2026, I directed one of the first fully AI-generated commercials to clear network standards and air nationally: a 30-second spot for Proofpoint, produced with ONLYCH1LD and broadcast on ESPN. I immediately applied these lessons to two more spots, Luma Taxi and Luma Suds, for the Luma Dream Brief. Both were selected by an elite creative industry jury, run as real ads, and officially submitted to Cannes Lions. Once again, I am taking these lessons and this momentum and putting them right back into practice. No bending the knee. No asking nicely. More firsts are coming.

The tools are ready. The networks are accepting the work. The festivals are beginning to evaluate it on the same stage as everything else. We are in full transition now. There are sea changes happening under the surface that will start manifesting in radically diverse ways.

The era of Post-Permission Cinema is not approaching. It has arrived and now surrounds us.

Why Naming This Matters

Every wave of innovation is first dominated by technical conversations. It took storytelling breakthroughs like Jurassic Park and Pixar’s string of early hits to transform technical achievements into a larger, more human, and compelling conversation.

Right now, AI filmmaking is dominated by tech bros flexing workflows and render hacks. Where are the writers? Where are the storytellers? Where are those trying to go beyond the multitude of Elon Musk remixes and cat memes?

I jumped into these tools early, and I want to start naming what’s going on. I want to start having a more nuanced discussion and move beyond all the triggered trolls in my DMs, copying and pasting the same stale arguments. When you stand up first in an area, you can’t help but provoke debate. But would you rather have the machines go fully autonomous, or have some humans in there, wrestling with the tools and fighting to push beyond the edges? What if creatives took over the tools and the conversation from the platform companies, the venture-funded labs, and the trade press still trying to figure out how to cover it? Creators are getting drowned out and marginalized now. We have to punch back.

Post-Permission Cinema is the term for what we are all living through. It describes the power shift. It describes the pipeline change. And it describes the creative obligation that comes with it: there are no more excuses.

The interesting thing is how it is triggering the top and bottom of the pecking order. The old guard and traditional gatekeepers are shaking, as are the anonymous YouTube commenters and trolls. I’m swimming somewhere in the messy middle.

I’m for the independent artists. For poets and writers and makers trying to make themselves heard. I believe it’s a privilege not to use every tool at your disposal. Does Steven Spielberg have any use for emerging tools? Probably not, unless they serve his vision. However, a filmmaker with 300 subscribers on YouTube is in a different boat. The world is not paying attention yet. And I believe the hungry artists with something to say will do whatever it takes to give their ideas shape.

These tools are for anyone who has ever felt blocked.

For anyone who has been denied access. For those who have not been able to raise funds to make their ideas come to life.

Why spend any more of your career idling at red lights that never turn?

The roads are open now.

Where we’re going, you don’t even need roads. You don’t need a green light. You don’t need a studio. You don’t need a network pickup or an agency brief or a client budget approved in Q1.

You need a vision, a set of tools that are already in your hands, and the discipline to stop waiting for someone to tell you it’s okay.

That permission was never going to come anyway. So stop asking and just make like you’ve never made before.

And as we’re seeing early on, traditional gatekeepers are paying attention and trying to figure out what’s going on. Conversations are happening, and models are shifting.

You can’t control that. But you can control what you make.

So what are you waiting for?

Andrew “Oyl” Miller is an advertising Creative Director, Copywriter, and AI Film Director. He spent 15 years working at Wieden+Kennedy on brands like Nike, PlayStation, MLB, Amazon, and IKEA, and is now one of the first people to direct a fully AI-generated commercial for broadcast television. You can follow his insights and updates in his newsletter.