How a hybrid generative-AI workflow and the Luma Dream Brief turned two solo, cinematic experiments into official Cannes Lions entries.

I made two commercials completely by myself.

And now, thanks to an opportunity from the Luma Dream Brief, they are both headed to Cannes Lions as official entries.

The first spot, Luma Taxi, was born from an idea I’d had in my head for ages: drop futuristic tech into the Old West, and play it entirely straight. No winking at the camera, no lengthy explanations. Just cowboys and cowgirls acting as early adopters of a new technology and never looking back.

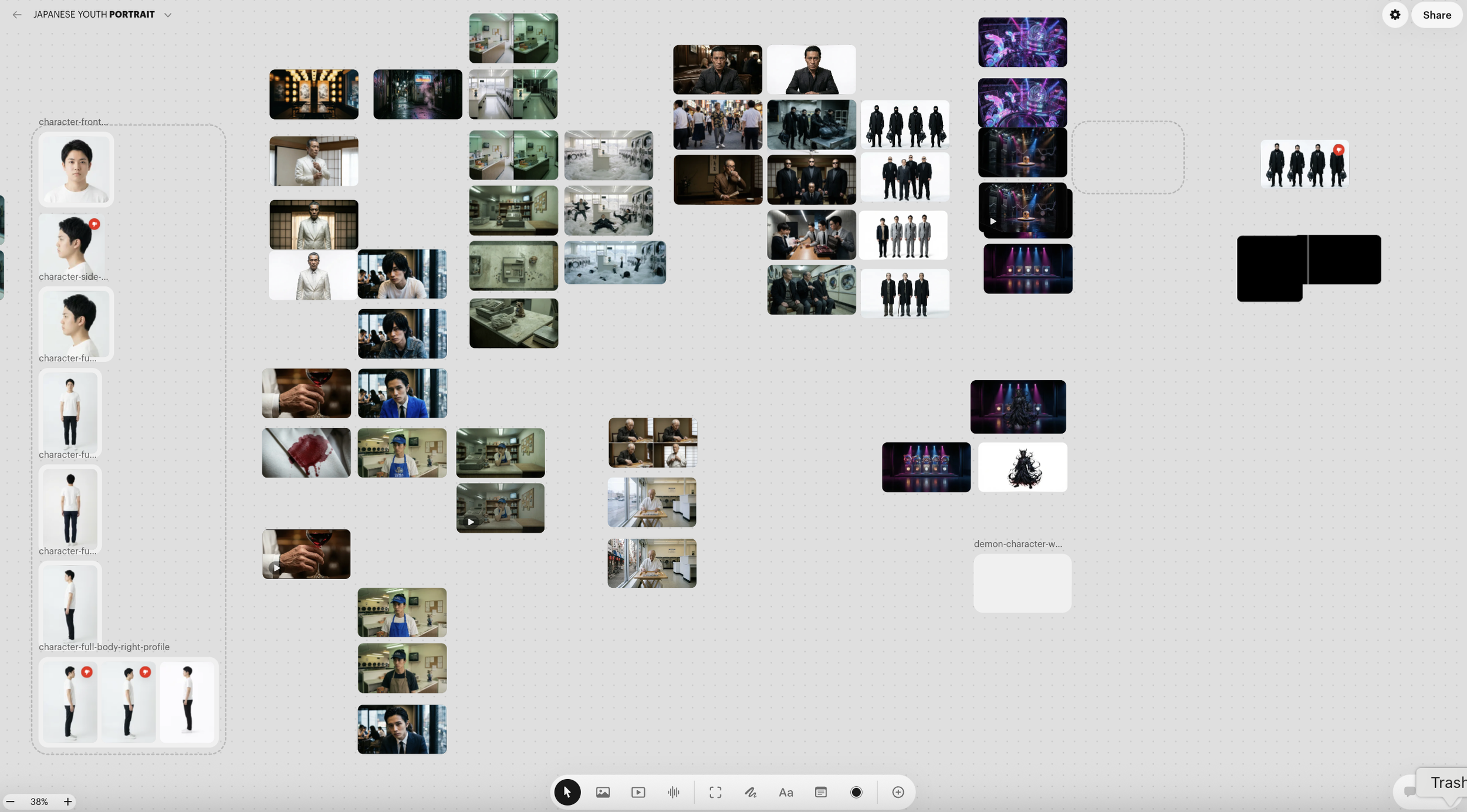

The second spot, Luma Suds, draws on a deeply personal canon of world-building. Having spent over a decade living in Tokyo, I’ve constantly looked for ways to subvert the gritty, cinematic Japanese crime drama. I wanted to create a laundry detergent commercial with literal life-and-death stakes, set in a dark criminal underworld where yakuza family members keep lying about the nature of the red stains on their clothes. It’s always that damn “beet root.”

Both of these films were made by me, sitting in front of my laptop, orchestrating every aspect of the generative-AI production.

The one-person studio is now a reality.

Here is a look under the hood at how they came together.

The Blueprint and the Build

Everything started with a script. From there, I wrote a brief outline of the world-building and tone, and uploaded those documents to Luma’s agents.

Then came the visuals. I started by nailing the look of the characters as stills before moving on to the settings. It took a lot of trial and error to achieve the gritty, lived-in, photorealistic worlds I was imagining, but Luma did a pretty incredible job of matching the aesthetic in my head.

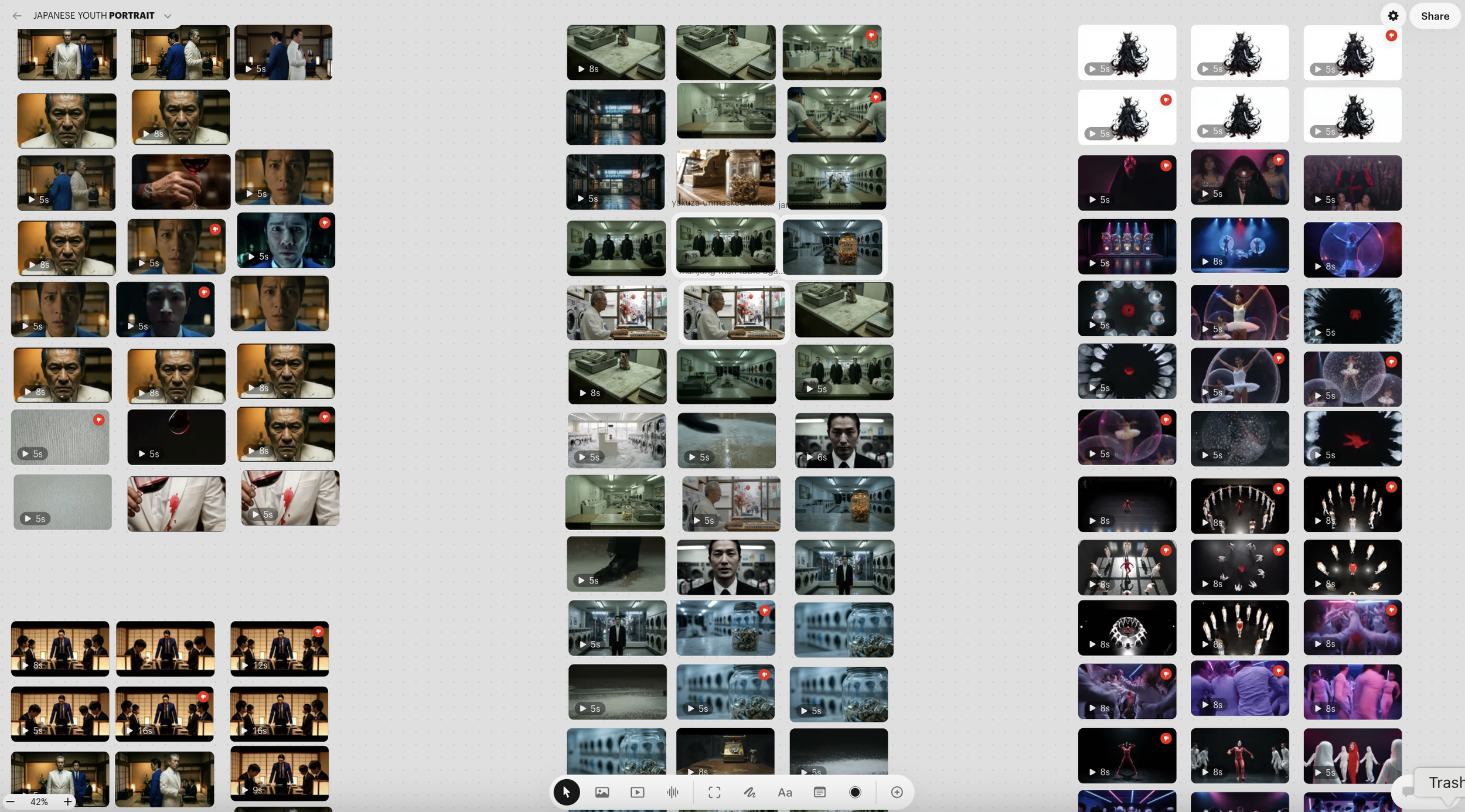

As the visuals developed, I moved into Suno to start working on the music. Because each film plays in a very distinct genre, I had clear guardrails for the sound I wanted. I generated around 15 to 20 tracks, picked my favorites, and dropped them into the timeline on my free trial of Final Cut Pro.

Once I had a music bed and my early generated footage, I started cobbling together a rough cut.

The “Pixar Method” of Gen-AI Directing

My AI directing process is heavily modeled after the Pixar method. In Ed Catmull’s book Creativity, Inc., he lays out a rigorous model for iterating and dialing in animated features. You start with very rough sketches cut together into sequences. Over time, the animation is developed and rendered in higher fidelity, replacing the original sketches. The point is that, from a very early stage, you can start to feel the pulse of your characters and the shape of your film.

I worked the exact same way with these edits.

I watched the rough assemblies over and over, diving into the problem areas, tackling the things that bothered me most, and making those my priorities. This is where taste comes in, and taste only comes from experience lived and absorbed outside the prompt box.

Honestly, there were moments I desperately wanted to include that the tools just couldn’t pull off. I wanted snappier back-and-forths between the characters, but AI “actors” aren’t quite there yet. So, I pivoted. I shifted my approach and leaned heavily into visual storytelling.

Iterating in Real-Time

Over the course of a week, I just kept staring at my rough cuts. Once the basic blocks were in place, I started finessing the transitions. Was the music connecting us? Were the cuts satisfying? How could I make it more surprising? These are the exact same questions I’ve been asking for the past 20 years while making commercials the traditional way. So many of those instincts and skills carry over directly into AI; the timeline is just massively compressed. It almost feels like you are rewriting the script over and over again until the film is done.

And in AI, there are no “re-shoots.” If you realize you are missing a shot in the edit, you can generate it and plug it in within minutes.

As the cuts got sharper, I zeroed in on the details. How could I land the end card and the product reveal? Were there facial expressions I wanted to try again? Did I need supporting sound effects? Was the footage getting repetitive? It was time to fine-tune and kill my darlings.

Solving for Story

With Luma Taxi, the spot was entirely driven by the narration. I wanted it to feel like an Old West fable, guided by a gravelly, unreliable narrator telling us exactly how things went down. The voice was designed using ElevenLabs. After about seven variations, I found the exact tone and texture I needed, a voice I definitely want to use again in future projects.

I fine-tuned that voice to match the rhythm of the visuals and the music until it felt seamless. If a gap felt too quiet, I’d write a little more VO to connect the thoughts. My original VO ran long (as it usually does), so I kept watching the cut and refining the narration all the way through production.

With Luma Suds, the trickiest part was executing the opening “problem” trigger: the young yakuza spilling wine on the godfather. I spent dozens of generations trying to crack that scene “in-camera,” but the physics and blocking never worked out. Even when I described exactly what I wanted, the AI actor would do something completely out of pocket, like grabbing the godfather’s arm and violently shaking it to spill the wine, which killed the tension and drama of the moment.

Instead of fighting the physics, I leaned into the reaction shots of the partygoers to tell that part of the story. It actually ended up playing up the gravity and consequence of the moment far better than a direct spill would have.

The Era of Post-Permission Cinema

Looking back at these two spots, the biggest takeaway for me is how much of traditional filmmaking still applies. The tools have changed, but the fundamental need for human instinct hasn’t. For two decades, I’ve relied on taste, timing, and problem-solving to make ideas work. Now, I’m applying those exact same muscles to a generative workflow.

We are stepping into an era of post-permission cinema. You no longer need a massive crew, a sprawling location shoot, or a bloated budget to bring a wild, cinematic idea to life and get it all the way to Cannes. If I had my choice and an unlimited budget, I’d always choose the traditional way. But I am aware that change is coming, and new lanes and forms will emerge from these tools. My approach is to get in there early, deeply learn and test the tools, and help push the boundaries of what is possible.

Most of the early AI work I’ve seen has not been for me. At first, I thought it was just for quick memes and fan fiction featuring Elon Musk. Getting deeper into the tools, I now see that you can make whatever you want. The tech bros will continue making their “Hollywood is cooked” spectacles, but when true artists get behind the keyboard, different tones and voices will be unlocked.

It’s an exciting time for makers, when your ideas can go directly into production. No client approvals. No messy group meetings watering things down. No uncomfortable compromises. If you have an idea, you can bring it to life in a final, polished form. These tools have collapsed timelines and stacked disciplines in a way we’ve never seen before.

You just need a laptop, a clear vision, and the willingness to iterate until the story clicks.

The barriers are gone. Now, the only thing you need is a great idea.

Andrew “Oyl” Miller is an advertising Creative Director, Copywriter, and AI Film Director. He spent 15 years at Wieden+Kennedy working on brands like Nike, PlayStation, MLB, Amazon, and IKEA, and is now one of the first people to direct a fully AI-generated commercial for broadcast television. You can follow his insights and updates in his newsletter.